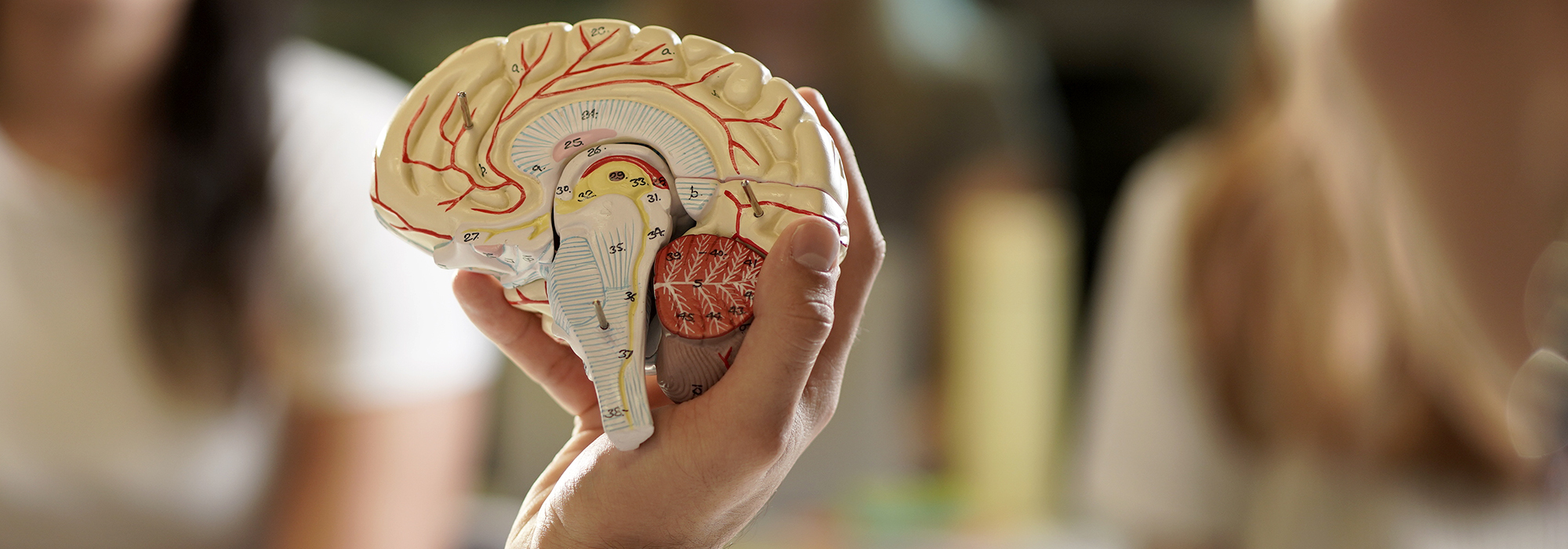

The human mind presents some of science’s greatest challenges, and an understanding of behaviour is key to solving some of humanity’s most pressing problems. Join the School of Psychology to understand human nature and explain both the strange and everyday behaviour you see around you.

We are recognised as New Zealand's number one School of Psychology for research quality and output, and you will be taught by academic staff who each work at the forefront of their respective area.

Aotearoa's only Bachelor of Psychology

Help solve some of Aotearoa’s most crucial problems. Study Psychology with us in 2024.

Find out more

Psychology

Study Psychology to understand behaviour—how we think, feel, and act—and how those processes can go wrong. Learn how our biology and our environment interact to make us who we are.

Find out more

Available subjects

- Psychological Science

- Brain Sciences and Mental Health

- Clinical Psychology

- Cognitive and Behavioural Neuroscience

- Cognitive Science

- Criminal Justice and Psychology

- Cross-Cultural Psychology

- Educational Psychology

- Forensic Psychology

- Health Psychology

- Māori Psychology

- Mental Health: Principles and Applications

- Work and Organisational Psychology

2024, Trimester 1 - Tutor and Teaching Assistant Roles

Applications are now open! The School of Psychology is currently seeking applicants for several Tutor and Teaching Assistant roles. Applications close on 15 January 2024 at 10am. Late applications may be considered.

Apply for one of these roles

Support the School of Psychology's Research

Support student research projects and community events on psychology-related topics to grow everyone’s knowledge and understanding of human behaviour.

Find out more about donating